A collaboration between PTA and Statista

This blog series provides the latest figures, trends and forecasts on the use of AI technologies in companies. With fact-based findings, we provide well-founded insights to make the latest advances in AI understandable and tangible. The series is produced in cooperation with Statista.

Agentic AI can do more than provide answers: AI agents can plan tasks, use tools and initiate processes. This creates real added value, but it also places new demands on the underlying infrastructure. As soon as agents “act”, good prompts are no longer enough. What is needed is a stable data foundation, clear rules and well-trained employees.¹

Context instead of gut feeling: knowledge must be findable and reliable

Many companies already have the necessary information, but it is spread across many sources and formats: guidelines as PDFs, process details on the intranet, master data in the ERP system, exceptions in emails. For Agentic AI, it is crucial that knowledge is findable, up to date and verifiable.

A pragmatic approach is “grounding” via retrieval-augmented generation (RAG): the system searches internal sources for suitable content and uses it as the basis for decisions and answers. For this to work reliably, data sources must be maintained and structured, and access must be properly regulated.²
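The grounding idea can be illustrated with a small Python sketch. It is not a production retrieval system: the `Document` fields, the keyword-overlap scoring and the one-year freshness limit are assumptions made for illustration, and a real setup would typically use a search index or vector store. What it does show is the order of checks: access rights first, freshness second, relevance last, with every hit carrying its source so the answer stays verifiable.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Document:
    source: str            # where the text lives, so answers can cite it
    text: str
    last_reviewed: date    # when someone last confirmed the content
    allowed_roles: set[str]


def retrieve(query: str, docs: list[Document], role: str, today: date,
             max_age_days: int = 365, top_k: int = 2) -> list[Document]:
    """Return the best-matching documents that the role may read and that are current."""
    query_terms = set(query.lower().split())
    scored = []
    for doc in docs:
        if role not in doc.allowed_roles:
            continue  # access control: never ground on content the user may not see
        if (today - doc.last_reviewed).days > max_age_days:
            continue  # freshness: skip content that has not been reviewed recently
        overlap = len(query_terms & set(doc.text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, hits: list[Document]):
    """Build a grounded prompt, or return None if no usable source was found."""
    if not hits:
        return None  # better to escalate than to let the model guess
    context = "\n".join(f"[{doc.source}] {doc.text}" for doc in hits)
    return f"Answer using only these sources and cite them.\n{context}\n\nQuestion: {query}"


# Hypothetical sample data for illustration only.
DOCS = [
    Document("intranet/travel-policy",
             "Hotel costs above 200 euros per night need manager approval",
             date(2025, 1, 10), {"employee", "manager"}),
    Document("erp/supplier-terms",
             "Supplier payment terms are 30 days net",
             date(2025, 3, 1), {"finance"}),
    Document("pdf/old-travel-policy",
             "Hotel costs above 150 euros need approval",
             date(2019, 5, 1), {"employee"}),
]

hits = retrieve("Which hotel costs need approval", DOCS,
                role="employee", today=date(2025, 6, 1))
prompt = build_prompt("Which hotel costs need approval", hits)
```

In this example the outdated PDF and the finance-only ERP entry are filtered out before relevance is even considered, which is why maintained metadata matters as much as the text itself.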

Agents are not allowed to do “everything”: secure tool use is mandatory

The biggest difference between a chatbot and an agent is that the agent acts. An agent can create tickets, change data, prepare orders or start workflows. That is why three questions need to be clarified early on: Which tools is the agent allowed to use? With what rights? And when does it need approval?
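These three questions can be written down as an explicit policy that is checked before every tool call. The following Python sketch is one possible shape, not a reference implementation: the tool names and the `POLICY` table are hypothetical, and the key design choice is "default deny", meaning that any tool not listed is refused.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ToolRule:
    allowed: bool
    needs_approval: bool = False


# Hypothetical policy for illustration: which tools, and when a human must confirm.
POLICY = {
    "create_ticket": ToolRule(allowed=True),
    "prepare_order": ToolRule(allowed=True, needs_approval=True),
    "change_master_data": ToolRule(allowed=False),
}


def check_tool_call(tool_name: str, policy: dict[str, ToolRule]) -> Decision:
    """Decide whether the agent may call a tool directly, with approval, or not at all."""
    rule = policy.get(tool_name)
    if rule is None or not rule.allowed:
        return Decision.DENY  # default deny: unknown tools are never executed
    if rule.needs_approval:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

With this table, `check_tool_call("prepare_order", POLICY)` returns `Decision.NEEDS_APPROVAL`, so the order is only prepared after a person has confirmed it.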

A simple scoping exercise helps here: how much control does the company have over the model, data and application, and what security measures can be derived from this? AWS offers a scoping matrix as a guide (ranging from “external service” to “own models”). This is practical because it makes the security effort and responsibilities tangible before scaling.³

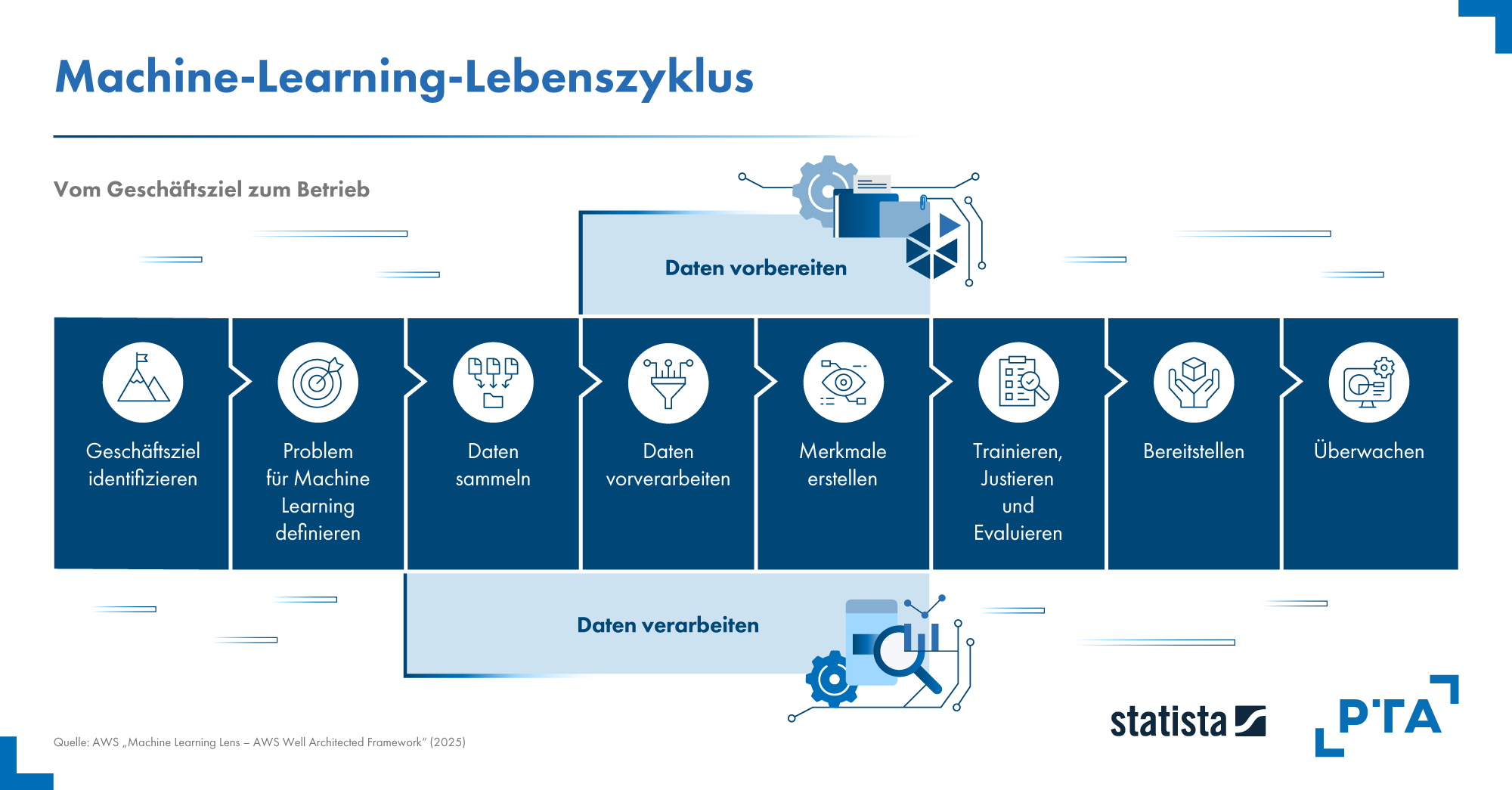

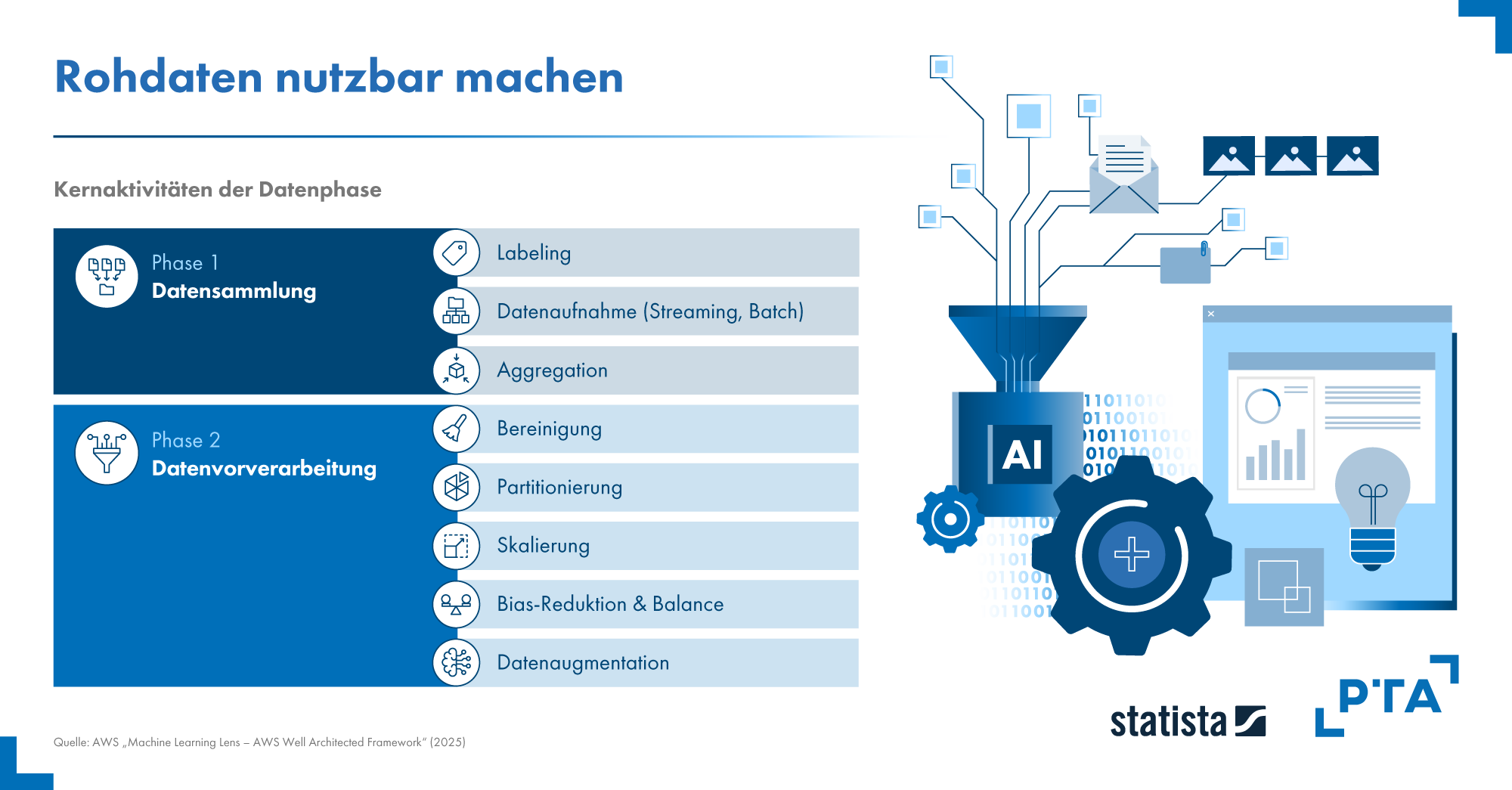

Data collection and processing for machine learning⁴

Make responsibility visible: rules, evidence, clear responsibilities

As soon as agents are involved in core processes, typical questions arise from a management and compliance perspective. Who is responsible for the use case? How is it documented? How do we deal with risks? And how do we demonstrate this in an audit? Established points of reference can help here. NIST has published a profile for generative AI that structures typical risks and countermeasures along the life cycle. The ISO/IEC 42001 standard describes how organizations can set up, operate and continuously improve a management system for AI. Both are less “theory” and more a practical checklist for setting up responsibilities and minimum standards properly.⁴,⁶,⁷

Operation instead of experiment: measuring, testing, monitoring

Generative AI systems do not always deliver identical results for the same input. A one-off test is therefore not enough. Companies need clear processes to keep a constant eye on quality and operation.
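One simple way to go beyond a one-off test is to run the same question many times and track the share of acceptable answers. The Python sketch below assumes a hypothetical `agent` callable and uses a deterministic stub in place of a real model so that the example is reproducible; the 95 percent threshold is likewise only an illustrative value.

```python
import itertools
from typing import Callable


def pass_rate(agent: Callable[[str], str], question: str,
              is_acceptable: Callable[[str], bool], runs: int = 20) -> float:
    """Ask the same question several times and return the share of acceptable answers."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passed = sum(1 for _ in range(runs) if is_acceptable(agent(question)))
    return passed / runs


def make_stub_agent(answers: list[str]) -> Callable[[str], str]:
    """Stand-in for a real agent: cycles through fixed answers to mimic varying output."""
    answer_cycle = itertools.cycle(answers)

    def agent(question: str) -> str:
        return next(answer_cycle)

    return agent


# Hypothetical example: every fourth answer is wrong.
stub = make_stub_agent(["30 days", "30 days", "30 days", "14 days"])
rate = pass_rate(stub, "What are the supplier payment terms?",
                 lambda answer: "30 days" in answer, runs=20)
meets_threshold = rate >= 0.95  # illustrative release threshold
```

Here the stub answers correctly in 15 of 20 runs, so `rate` is 0.75 and the check fails. A single test run would have had a three-in-four chance of looking fine.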

AI agents can select different tools depending on the task, carry out steps in parallel and adapt their approach iteratively. This increases the need for transparency: it must be possible to understand which decisions are made, which tools are used and how behavior changes over time.

Practical guidelines therefore recommend operating AI like other business software, with clear testing, change and monitoring processes, so that control is maintained even as autonomy grows.²,³,⁵
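Transparency about which tools an agent used, and with what result, starts with a record of every call. The following Python sketch wraps tools in a minimal audit log. The `AuditLog` class and the record fields are assumptions made for illustration; in practice the records would go to the company's existing logging or monitoring system rather than an in-memory list.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Any, Callable


class AuditLog:
    """Collects one record per tool call so agent behavior can be reviewed later."""

    def __init__(self) -> None:
        self.records: list[dict[str, Any]] = []

    def wrap(self, name: str, tool: Callable[..., Any]) -> Callable[..., Any]:
        """Return a version of the tool that logs every call, including failures."""
        def logged(**kwargs: Any) -> Any:
            record = {
                "time": datetime.now(timezone.utc).isoformat(),
                "tool": name,
                "arguments": kwargs,
                "status": "ok",
            }
            try:
                return tool(**kwargs)
            except Exception as exc:
                record["status"] = f"error: {exc}"
                raise
            finally:
                self.records.append(record)  # runs on success and on failure

        return logged

    def tool_usage(self) -> Counter:
        """Count calls per tool, e.g. to spot changes in behavior over time."""
        return Counter(record["tool"] for record in self.records)


# Hypothetical tool for illustration.
log = AuditLog()
create_ticket = log.wrap("create_ticket", lambda title: f"TICKET: {title}")
result = create_ticket(title="Printer offline")
```

Comparing `tool_usage()` across releases or weeks is a cheap first signal that an agent has started to behave differently, before quality problems show up in the results.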

First the foundation – then the autonomy

Agentic AI is effective when it is securely embedded in the company’s IT: with a good knowledge base, clear access rights, comprehensible rules and operations that keep quality and costs under control. Those who lay these foundations early on will move agents out of the pilot phase faster and significantly reduce subsequent rework and risk.

¹ OpenAI “A practical guide to building agents” (PDF) (2025): Patterns, best practices and guardrails for agents (incremental build, secure execution). OpenAI.

² Google Cloud “Deploy and operate generative AI applications” (2024): Operation, deployment and processes for productive GenAI applications (DevOps/MLOps adaptation). Google Cloud.

³ Microsoft “AI workloads on Azure – Azure Well-Architected Framework” (2024/2025): Architecture and operational guidelines for AI workloads (design, operations, testing/evaluation). Microsoft Learn.

⁴ AWS “Machine Learning Lens – AWS Well-Architected Framework” (2025): Best practices for building and operating ML/AI workloads across the lifecycle. AWS.

⁵ AWS “Generative AI Security Scoping Matrix” (ongoing): Orientation for the classification of GenAI use cases and derivation of suitable security controls. AWS.

⁶ NIST “AI RMF: Generative AI Profile” (NIST AI 600-1) (2024): Risks and countermeasures for GenAI along the life cycle. NIST.

⁷ ISO/IEC 42001:2023 (2023): Requirements for an AI management system (AIMS) for the establishment, operation and continuous improvement of AI in organizations. ISO.